Month: October 2023


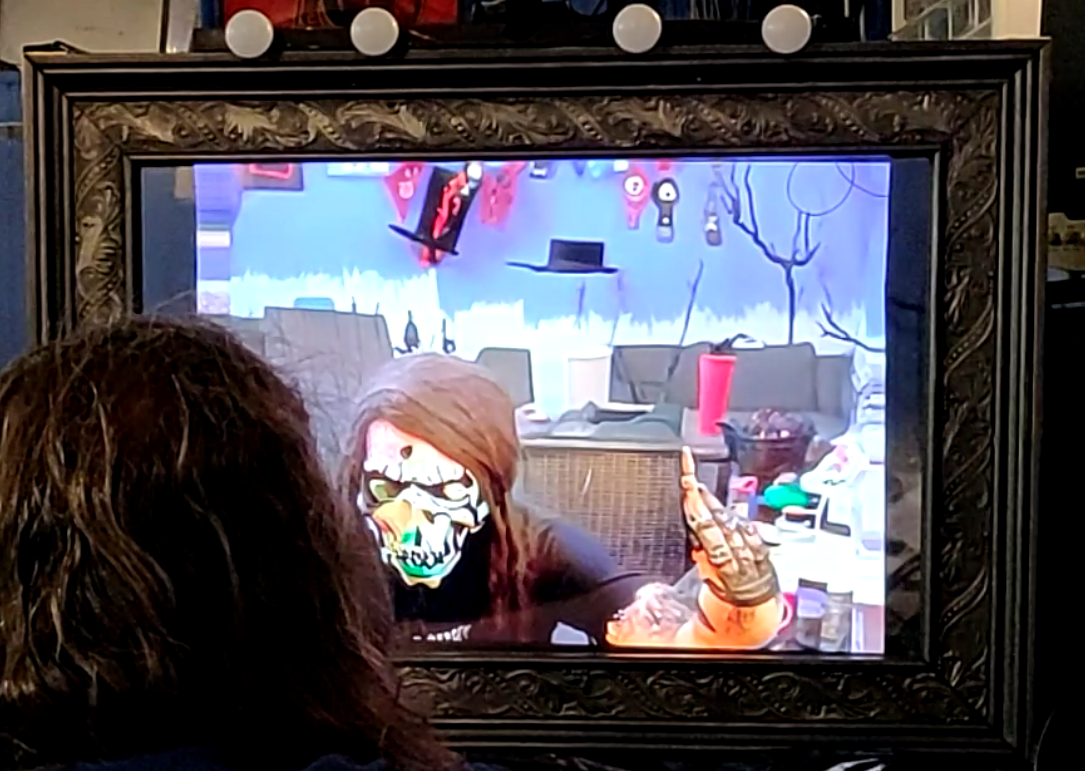

Spookifying Haunted Mirror build using Stable Diffusion

For this Halloween, I built a haunted mirror display for the porch that makes any trick-or-treater look extra spooky. Thanks to the voodoo power of AI, those who gaze into the mirror are treated to a visage of their best Halloween self. See below for code and build tips if you’re interested in making your own!…